How Generative AI Is Rewriting Architectural Representation

1. A Crisis or Reinvention of Representation?

When the Image Stops Following Design and Starts Producing It

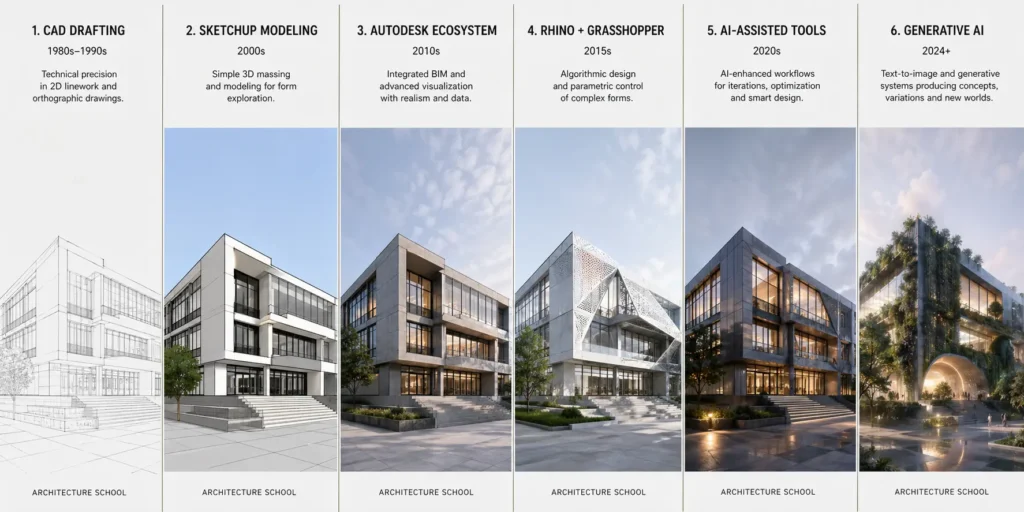

Architectural representation has always been more than a technical act. It has been ideology in graphic form. The perspectival drawing staged power. The axonometric reorganized systems thinking. The digital render turned atmosphere into persuasion. Each shift altered not only how architecture was shown, but how it was conceived.

Generative AI introduces a deeper rupture. This is not another rendering software upgrade. It may be a reconfiguration of representation itself.

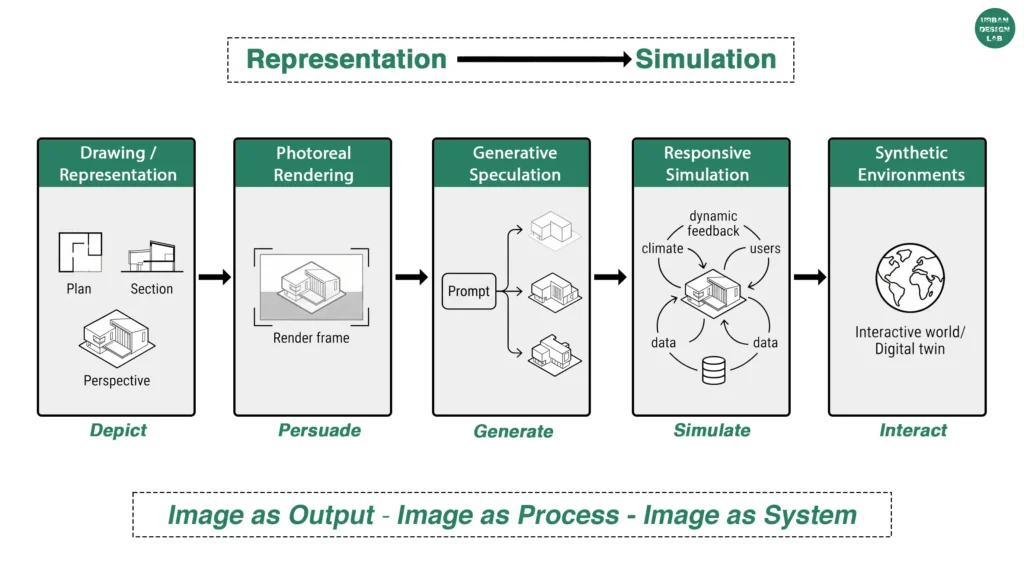

The shift is subtle but profound. Traditional rendering translated a designed proposition into image. AI systems increasingly collapse that sequence. The image can precede the proposition. Conceptual exploration, atmospheric testing, formal mutation, material speculation, even urban futures can emerge through synthetic visual generation before a project is coherently authored in conventional disciplinary terms.

That inversion matters.

For decades, architectural rendering pursued fidelity: better lighting, better textures, better realism. Today the frontier is no longer realism. It is cognition. The question has moved from “How quickly can we visualize?” to “What happens when representation starts participating in thought?”

That is why current debates around OpenAI’s image systems, NVIDIA’s generative ecosystems, and Autodesk’s AI-assisted workflows feel larger than software discourse. They expose a disciplinary anxiety: if representation has historically been architecture’s medium of projection, what happens when projection becomes generative intelligence?

Perhaps rendering is not ending. Perhaps it is mutating into something less stable, and far more consequential.

2. Rendering as Authorship

Who Owns the Image When Intelligence Is Distributed?

Architectural imagery has always concealed labor. Behind the atmospheric competition rendering lies software expertise, aesthetic precedent, image compositing, and often outsourced visualization economies.

AI makes this hidden authorship impossible to ignore. When a prompt generates a seductive urban vision trained on millions of prior images, who authors that image? The architect? The model? The dataset? The aesthetic histories embedded in the machine? This is not merely copyright anxiety. It is a disciplinary question.

Architectural rendering has long performed authorship through singularity: the signature visual language of offices from Zaha Hadid to Bjarke Ingels often functions as design identity. Generative systems complicate that. Style becomes statistically reproducible. Visual originality starts to look suspiciously like prompt variation.

That destabilizes authorship, but it also reveals how much contemporary rendering was already operating through aesthetic repetition.

The politics here matter. If AI amplifies dominant visual cultures (hyper-polished, neo-organic, endlessly atmospheric urban futures), then the problem is not artificial creativity but algorithmic homogenization.

Yet another possibility emerges. Distributed authorship may not weaken architectural imagination, but pluralize it. The author shifts from solitary form-maker to curator, editor, discriminator. Perhaps the future architectural image is less composed than orchestrated.

3. Prompting vs Designing

Is Prompting a Design Act or Merely an Interface Illusion?

Prompting is often framed as either a revolutionary design medium or a lazy shortcut. Both positions are shallow. Prompting is neither magic nor fraud. It is emerging as a peculiar hybrid literacy. Good prompting increasingly resembles diagramming: sequencing variables, defining relations, setting constraints, iterating outcomes. In this sense, it echoes parametric logic, except through language.

Yet prompting can also simulate design intelligence while bypassing it. This is the disciplinary tension. An evocative image of a climate-adaptive district can be produced in seconds. But is that urban thought, or aesthetic speculation masquerading as intelligence?

The answer depends on whether prompting remains image extraction or evolves into design interrogation. Some AI-native studios are already treating prompts less as a means of producing outputs and more as conversational engines for conceptual divergence, almost like speculative sketchbooks at planetary scale. That is potentially radical. But there is an equal risk of representation detached from tectonic responsibility, where architecture becomes moodboard urbanism. Prompting may become design only when linked back to judgment, iteration, geometry, systems, and consequences. Otherwise it remains visual rhetoric.

4. From Representation to Synthetic Speculation

When Images Stop Depicting Futures and Start Simulating Them

Perhaps the most radical shift is not the automation of photorealism, but the rise of synthetic speculation. Architectural images have historically represented possible futures. AI images increasingly perform them. This matters because speculative architecture has often been constrained by drawing labor.

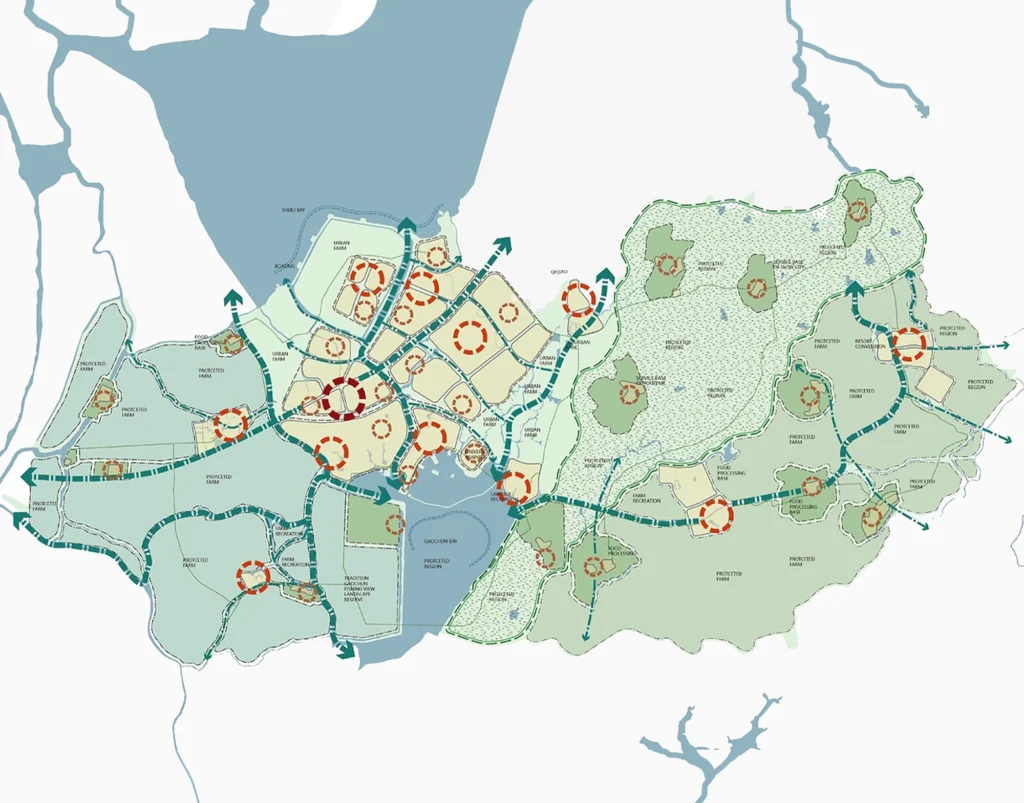

Now whole urban scenarios (post-carbon districts, impossible megastructures, ritual ecologies, climate migration settlements) can be iteratively generated as visual thought experiments. Representation begins behaving more like world-building. That is closer to cinema, gaming, and simulation culture than traditional rendering.

OpenAI’s multimodal image-video tools hint at this transition. Static renderings may evolve into responsive narrative environments. Not image as endpoint, but image as synthetic scenario. Architectural speculation may move from heroic manifesto drawings into dynamic simulation ecologies. And yet synthetic speculation cuts both ways. It can open radical imaginaries. It can also flood discourse with seductive unreality. The challenge becomes distinguishing speculative intelligence from synthetic spectacle. That may be the new critical literacy architecture schools will need to teach.

5. What AI Still Cannot Replace

Judgment Is Not an Image Problem

For all its visual fluency, AI remains profoundly weak where architecture matters most. It does not understand gravity as tectonic culture. It does not grasp ritual threshold, political context, material weathering, construction economies, or social conflict. It can only approximate them visually.

Approximation is not intelligence. Architecture is not image production. It is situated judgment. No generative system understands the civic ethics of demolition. Or why a sacred procession route matters. Or how a wall detail mediates climate. These are not rendering problems. They are epistemic ones. Material intelligence remains stubbornly resistant to prompt culture. So does critical responsibility. This is where techno-utopian narratives collapse.

The strongest argument for AI in architecture may not be that it replaces imagination, but that it exposes what imagination alone was never sufficient for. Because the harder problems were never visual. They were always political, material, spatial. And remain so.

6. End of Rendering or Birth of Something Else?

The Image Is Not Dying. Its Role Is Being Contested.

Predictions about the death of rendering miss the point. Rendering is not disappearing. Its monopoly is. For three decades the photoreal architectural image governed aspiration, persuasion, and often deception. Generative AI has unsettled that regime. Not because machines now make prettier images. Because the relationship between image and thought has become unstable. And instability is often where disciplines reinvent themselves. The real question is not whether AI will replace architectural representation. It is whether architecture can critically shape what representation becomes under AI.

That is a cultural project, not a software problem. The danger is obvious: synthetic spectacle, aesthetic homogenization, image inflation. But so is the possibility: richer speculation, expanded conceptual range, new representational intelligence.

Perhaps the render is not ending. Perhaps it is losing its innocence. And perhaps that is overdue. Because the future of architectural representation may belong neither to photoreal persuasion nor automated fantasy, but to a more contested medium where image, simulation, authorship and critique collide.

Related articles

Remembering Frank O. Gehry: The Architect Who Redrew Skylines

Architecture Professional Degree Delisting: Explained

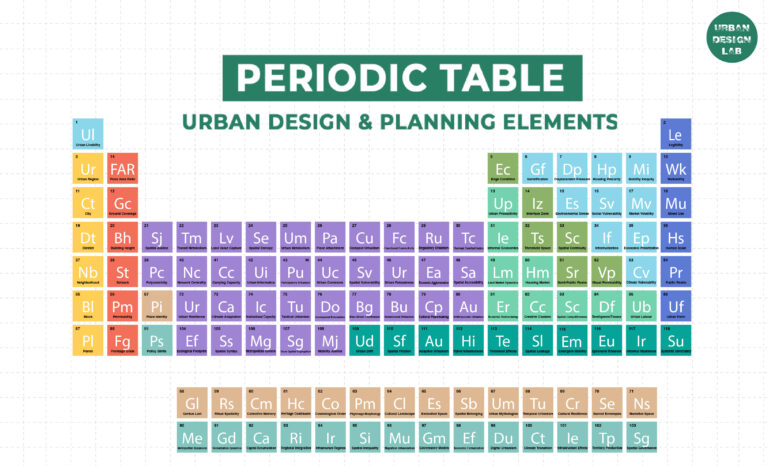

Periodic Table for Urban Design and Planning Elements

History of Urban Planning in India

UDL GIS

MASTERCLASS

GIS Made Easy – Learn to Map, Analyse, and Transform Urban Futures

Session Dates

6th-10th July, 2026

Curating the best graduate thesis projects globally!

Learn to Design Portfolios, Publications,

and Presentation Boards

Free E-Book

From thesis to Portfolio

A Guide to Convert Academic Work into a Professional Portfolio”

Urban Design Lab

Be the part of our Network

Stay updated on workshops, design tools, and calls for collaboration

Recent Posts

- Article Posted:

- Article Posted:

- Article Posted:

- Article Posted:

- Article Posted:

- Article Posted:

- Article Posted:

- Article Posted:

- Article Posted:

- Article Posted:

- Article Posted:

- Article Posted:

- Article Posted:

- Article Posted:

- Article Posted:

Sign up for our Newsletter

“Let’s explore the new avenues of Urban environment together “